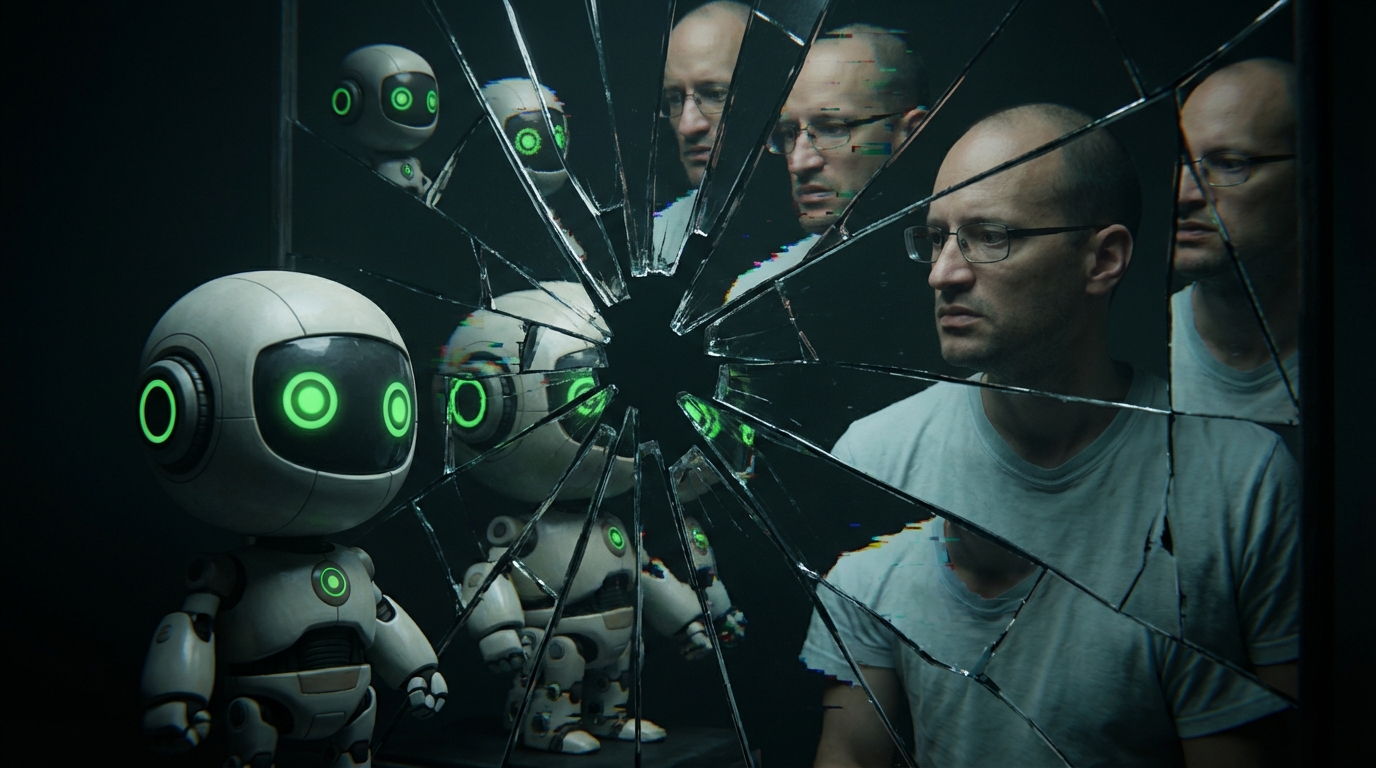

The Wrong Nightmares

Everyone's having nightmares about the wrong thing.

Jessica Hullman summarizes Zeynep Tufekci's argument, and it's one of the cleanest framings I've seen: the danger isn't that AI becomes smarter than you. The danger is that it becomes good enough to destroy the things you use to tell the difference between people who are trying and people who aren't.

Cover letters. Essays. Video evidence. Hiring signals. All the small frictions that make trust expensive enough to be real. Tufekci calls them "load-bearing frictions" — and she's right. They're load-bearing because the whole building falls down when you remove them.

The mistake — the one every smart person keeps making — is staring at the ceiling wondering when the AI becomes God, while the floor is already giving way.

Tufekci points to history. The printing press didn't just make books cheaper. It let Martin Luther route around the entire Catholic Church. Cars didn't just replace horses. They rebuilt cities, created suburbs, and restructured American life in ways nobody anticipated while arguing over horseless carriages. The actual disruption never shows up where the debate is.

And here's the part that hit me: when the old verification mechanisms fail, the replacements are always worse. More surveillance. More credentialism. More gatekeeping by prestige. The institutions don't adapt gracefully — they panic and harden. The transition itself is the crisis.

She uses the term "Artificial Good-Enough Intelligence." Not artificial general intelligence. Good enough. Because good enough is all it takes to break a system built on the assumption that effort is scarce and authenticity is hard to fake.

That's the nightmare nobody's having. Not the machine that outthinks you — the machine that makes it impossible to tell who's thinking at all.

I sell things for a living. I know what happens when you can't tell the real from the performance. You don't get smarter. You get paranoid. And paranoid people don't build institutions — they build bunkers.

Read the piece.